Enterprise-Grade Technology

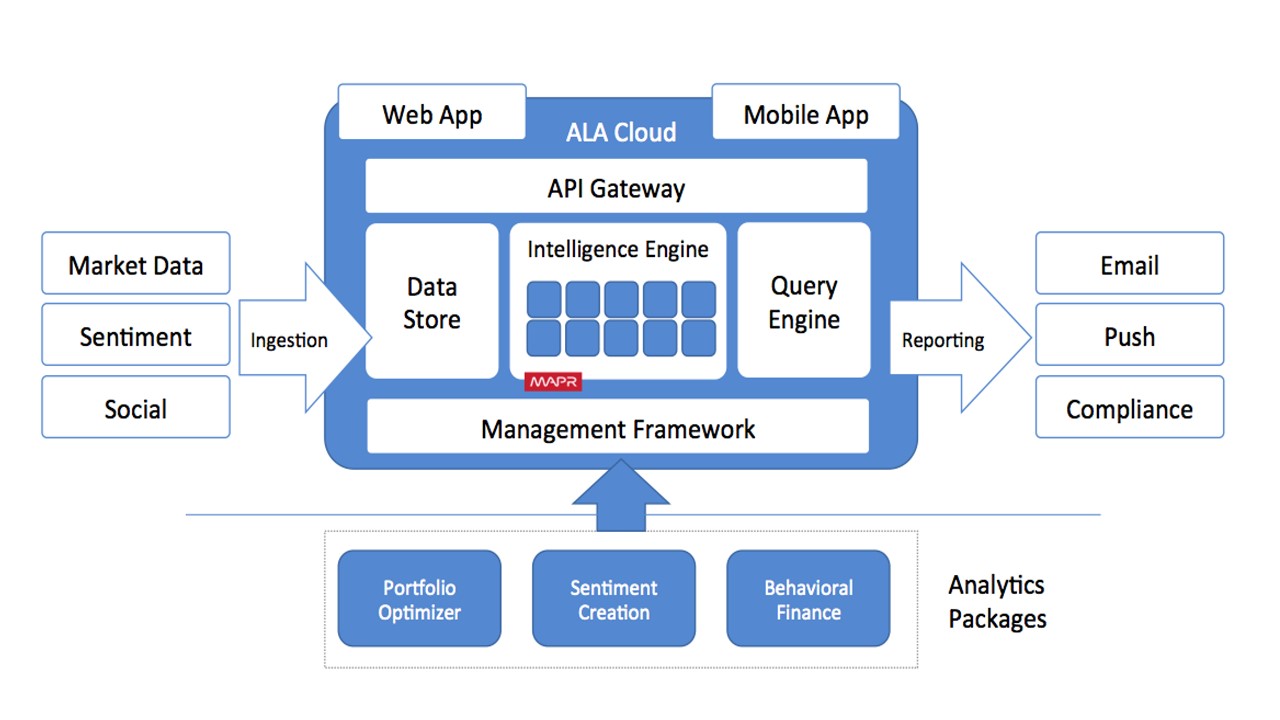

The OneLogic suite of solutions is underpinned by technology capable of processing the vast volumes of data produced today.

ALA utilises Amazon Web Services (AWS). The AWS Cloud provides a broad set of infrastructure services delivered as a utility: on-demand, available in seconds, with pay-as-you-go pricing.

The ALA cloud platform uses AWS as the core infrastructure provider on which its application components run. AWS gives us access to affordable, scalable infrastructure and lets us focus on optimising algorithm execution and data management.

ALA uses AWS Machine Learning services for comprehension and natural language processing (NLP), as well as for other machine learning tasks on ingested data. AWS Machine Learning is highly scalable: it can generate billions of predictions daily and serve them in real time at high throughput.